On the Edge #29

This week I’ll talk about some papers which are drawing paths for the design of new AI systems. Continuing the trend of data-set scaling vs. parameter scaling (OtE #26 🔗)

Before that, we’ll talk about two metrics used in image processing called shape bias and texture bias. Shape bias indicates the tendency to prioritize the shapes and contours of objects in an image which is particularly important for recognizing objects and understanding their spatial relationships to one another. For example, when viewing an image of a cat, the shape bias would help to detect the overall outline of the cat from the background and other objects in the image.

On the other hand texture bias, indicates the tendency to prioritize the fine details and surface features of objects in an image which helps to distinguish between different textures, such as the roughness of a tree bark versus the smoothness of a metal surface.

Now Google introduced ViT, an image model, that scales up the amount of pre-training dumped into transformer-architecture models leading to systems that display human-like qualities 🔗 . The results of this scaling have been compared with humans (and previous works) in terms of shape bias and texture bias.

The last bars (green, blue, red) are the recent result, and you can see how ViT models are closer to human-level compared to previous models. It’s still not human why it’s important?

There’s a pattern here. Recently transformers have shown that they allow for emergent complexity at scale, where pre-training leads to systems that arrive at humanlike performance qualities, of course, if we pre-train them enough.

This is not a scientific approach but a design approach.

In OtE #20 🔗 we mentioned RT-1 as the “GPT of robotics.” Now Google and Technische the University of Berlin have introduced PaLM-E 🔗 where they’ve incorporated multimodality to design a more general system. PaLM-E is actually a merge of PaLM (Google’s language model) with ViT and the low-level policies are from RT-1. They found out that “using PaLM and ViT pre-training together with the full mixture of robotics and general visual-language data provides a significant performance increase compared to only training on the respective in-domain data.” You might remember that in OtE #26 🔗 we speculated that:

a large multimodal reinforcement learning model from DeepMind (an order of magnitude larger than Gato.)

Gato was also a multimodal AI with 1.17B parameters whereas PaLM-E has 562B parameters.

Microsoft also introduced Kosmos-1 🔗 which exploits multimodality “to perceive both language and embedding learn in context, reason, and generate. “

Multimodal AI systems use various techniques, including NLP, computer vision, and speech recognition, to analyze and understand the different modalities of data. And now with the new design paradigm for model architecture and pre-training approaches, multimodality is emerging as a new piece of information for model enrichment. This is a good survey paper on generative AI that also covers multimodality 🔗

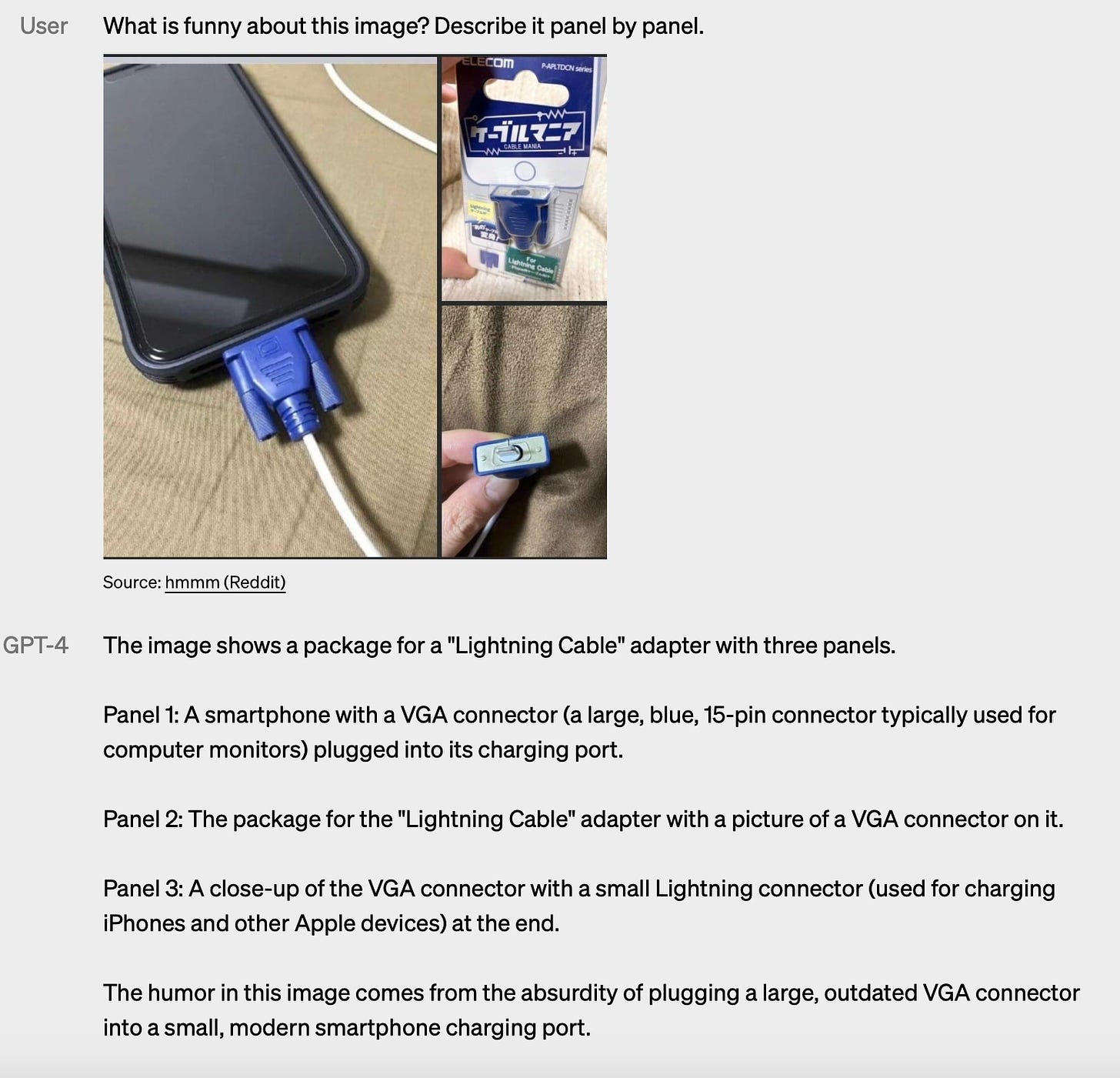

And now it might not surprise us to know that GPT-4, which is released, is using multimodality. It means now it understands images as an interaction tool. It might be a question that for example, Dall-E had the ability to understand images, how is this different? Below is an example of a task that distinguishes how GPT-4’s understanding is deeper than Dall-E's.

It’s also open for startups to use these technologies to attack hard problems. For example Unitary 🔗 uses multimodal AI to bring safety to content generation.

It’s not all. It’s too naive to think that all that’s happening is on transformers and multimodality. This week I saw: 1) MathPromter🔗 that improves mathematical reasoning of LLMs 2) a better optmizer🔗. Optimizers are responsible for updating the weights and biases of a neural network during training in order to minimize the loss function and Adam is the most famous optimizer but Google introduced a new optimizer which has significanly improved optimization on various models and tasks but there’s still a lot of work to be done and 3) we came to better understanding of LLMs while they do in-context learning 🔗. In traditional machine learning, the focus is on training the model on a set of data, and then using the same model to make predictions on new, unseen data. But, in-context learning allows the AI system to continuously learn and adapt based on the context of the data it is processing. For example, if an AI system is trained to identify objects in images, it may struggle to identify a certain object if it has only seen that object from one angle. In-context learning can help the system to better understand the object by incorporating additional data, like the object's location, orientation, or surroundings, into its learning process.

With all this progress, what government would do? We talked about how these technologies are strategic, I saw that FTC has already started 🔗 and reading this note from Tony Blair Institute was interesting 🔗:

Leading actors in the private sector are spending billions of dollars developing such systems so there may only be a few months for policy that will enable domestic firms and our public sector to catch up.

So we can expect that US, UK, or EU jump in to set regulations as fast as they can.

What other companies would do? Amazon already signed a partnership to deploy AI models directly on AWS 🔗 and I expect that Nvidia to sign a big deal in 2023.

What we will do? Don’t believe me, just watch.

These images have been created by Midjourney version 5 :