Less than a month ago, Sam Altman, the CEO of OpenAI, tweeted:

It's a very controversial topic, and whoever brings it up quickly gets sci-fi and paranoid responses. My stance on AGI has been this: if we look for AGI, we won't find it, because we can't define it. The Turing test is just one tool. For example, imagine an AGI that knows about the Turing test and wants to stay hidden, so it fails the test "intentionally." I still believe that, but Sam's tweet got me thinking again.

In general, I think OpenAI keeps getting better at improving GPT-3. Although GPT-3 was developed in the NLP domain, it has shown promising results in other fields, even image processing. But "generally," I see DeepMind's research as closer to solving general problems in AI. Besides their excellent work on agents that can win games they have never seen before, their work on AlphaFold was phenomenal progress in protein structure prediction. No AI system had ever solved such an important task before. That was also last year, and I predict DeepMind will revolutionize biology and its branches in the coming years. (This is also the vision from Alphabet that made DeepMind's NHS deal happen.) About ten days ago, OpenAI published a paper showing an AI system that can solve high school math olympiad problems. But IMHO, a better piece of work was done a year ago by DeepMind, here. And last but not least, two days ago DeepMind announced the first deep reinforcement learning system that can keep nuclear fusion plasma stable (the result of three years of research). I don't want to compare DeepMind with OpenAI; they're both great companies with outstanding researchers.

A couple of weeks ago, I read a long discussion about "AI alignment" between Richard Ngo, one of OpenAI's top scientists, and Eliezer Yudkowsky. AI alignment is the question of how far, and in which areas, an AGI's decision-making is aligned with human desires. Yudkowsky's work is more philosophical, but when I see that a lengthy discussion between these two top scientists doesn't produce a real flow of knowledge or information, I become more skeptical about OpenAI's work on AGI. At least, that's how it seems to me.

Last week Ilya Sutskever, OpenAI's chief scientist, tweeted this:

So, with that history in mind, I wasn't surprised. Yann LeCun, one of the most significant figures in AI and Facebook's Chief AI Scientist, replied:

I'm not sure whether LeCun is correct or OpenAI has reached a new level that nobody knows about (which, BTW, would contradict their philosophy!). I see OpenAI on a path where they're doing ground-breaking work. Still, I don't think that work is heading toward AGI; it's heading toward specific business ideas, which is not bad. To be fair to them, one of the best curricula on "AGI Safety" comes from OpenAI. Meanwhile, in the past month, Elon Musk has stated that Tesla's humanoid robot is its most important project.

This conversation and its timing seem important to me. In my research, I found an organization called the Sentience Institute. If we use the word "sentience" instead of "consciousness," things can look different. The institute has done great work on evaluating sentience in artificial entities (see Table 1 in the article "Incomplete list of features indicative of sentience in artificial entities"). Their definition of sentience is also worth reading. I also found the AI Alignment Forum, which brings together bright minds on the topic. My takeaway: I still hold my initial stance.

Interesting links

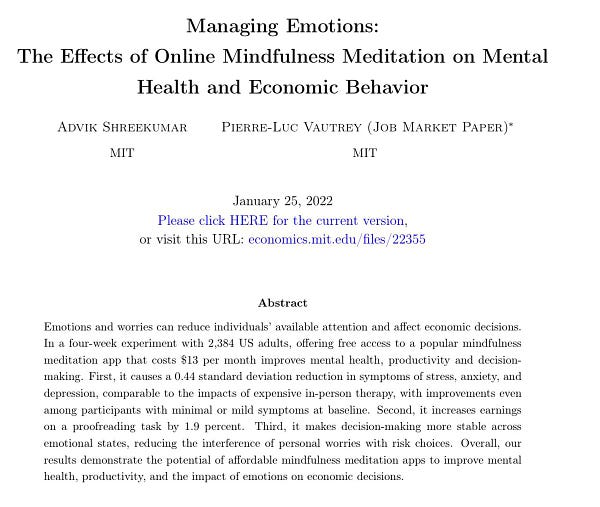

Mindfulness apps really work:

Using data science in history: https://cepr.org/sites/default/files/news/FreeDP_04Feb.pdf (Countries with an increasing number of writers and poets in the 17th century were more likely to have a higher rate of "self-made" women in the 20th century)

City Generator: https://www.townscapergame.com (Also here and here)

I just found out that working out has many benefits, but losing weight isn't really one of them: here. And this app uses heart-rate patterns to change your metabolic rate with only 23 minutes of workout a week: here and here.

Are you bored? Here's the web from the past:
